FLUX Consistent Style: How to Lock Your Aesthetic

FLUX produces some of the strongest AI-generated images available, but getting a consistent style across multiple prompts is a different challenge entirely and requires deliberate technique. Here's how to lock your aesthetic in FLUX and make it portable.

If you have found your FLUX aesthetic (the exact palette, lighting, texture, and mood that defines your visual identity), the next step is keeping it. Consistent style is not built into FLUX. Midjourney offers the --sref parameter and related style reference tools; FLUX has no native style reference feature. Consistency is something you build on top of FLUX, not something it gives you.

FLUX is a text-to-image AI model developed by Black Forest Labs, known for high visual quality and strong prompt adherence. Maintaining consistent style in FLUX requires a structured approach because, unlike Midjourney, FLUX lacks built-in style reference parameters. Style consistency is achieved through explicit text-based style specifications, seed control, and prompt architecture.

To get consistent results in FLUX, create a text-based style specification that defines your target visual attributes (palette, lighting, composition, texture, and mood) and prepend it to every generation prompt. Because text is FLUX's primary style constraint, an explicit, detailed specification has to do the work that a visual style reference does in Midjourney.

This guide covers three methods for locking your FLUX aesthetic — from seed control (limited but useful) to a portable style specification that works across FLUX, Midjourney, DALL-E, and any other text-guided image model.

FLUX's Strengths and Why Consistent Style Requires Extra Work

FLUX (from Black Forest Labs) stands out for two reasons: exceptional prompt adherence and high photorealistic quality. FLUX Pro in particular renders textures, lighting, and material surfaces with a precision that makes it a serious tool for professional visual work.

These strengths create the consistency paradox. FLUX follows text descriptions so faithfully that even small prompt changes cascade into significant visual differences. Change your subject from "a ceramic vase" to "a glass bottle" and the entire image shifts — lighting, background treatment, composition, color balance. The model reinterprets the full prompt, not just the part you changed.

What consistent style means in practice: you want ten images with different subjects and compositions that all feel like they belong to the same visual world. Same palette. Same lighting logic. Same texture treatment. Same mood. FLUX does not do this by default.

The other critical difference: FLUX has no --sref parameter, no style tuner, no visual style reference system. Every generation starts from text alone. This means your consistency system must be text-based — which, as it turns out, has advantages.

Method 1: Using Seeds for Reproducibility (Limited Use)

A seed is a number that determines the random starting state (the initial noise) of image generation. The same seed plus the same prompt, on the same model with the same settings (resolution, step count, guidance), reproduces the same image. Most FLUX interfaces (Replicate, fal.ai, ComfyUI, the Black Forest Labs API) let you set a seed value.

Seeds are useful for perfecting a single image. You iterate on a seed until the result matches your intent, then lock it for a reproducible output.

The critical limitation: Seeds only work for identical prompts. Change the subject, add a new element, or alter any prompt component and the style changes significantly. Seeds fix noise initialization, not style. They provide reproducibility, not consistency.

Best for: Product photography variants with identical setup, single-subject repeatability, or testing prompt variations on a single composition.

Not a solution for: Campaign consistency across different subjects, multi-session style maintenance, or collaborative workflows where others need to match your aesthetic.
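Because a seed only reproduces an image when everything else is identical, it helps to log the full set of generation inputs next to each output. The sketch below assumes a hypothetical `GenerationRecord` whose field names are illustrative; map them to whatever your interface uses (in Hugging Face diffusers' `FluxPipeline`, the equivalents are `num_inference_steps`, `guidance_scale`, `width`, `height`, and a seeded `generator`). The model label `"flux-dev"` is a placeholder.

```python
from dataclasses import dataclass, asdict, fields
import json

@dataclass(frozen=True)
class GenerationRecord:
    """Hypothetical log entry for one FLUX generation (field names are illustrative)."""
    model: str      # placeholder label for the FLUX variant you called
    prompt: str
    seed: int
    steps: int
    guidance: float
    width: int
    height: int

def differing_fields(a: GenerationRecord, b: GenerationRecord) -> list[str]:
    """Names of fields that differ; an empty list means the run is an exact repeat."""
    return [f.name for f in fields(GenerationRecord) if getattr(a, f.name) != getattr(b, f.name)]

base = GenerationRecord("flux-dev", "A ceramic coffee mug on a stone countertop", 42, 28, 3.5, 1024, 1024)
variant = GenerationRecord("flux-dev", "A glass bottle on a stone countertop", 42, 28, 3.5, 1024, 1024)

print(differing_fields(base, variant))  # ['prompt']: same seed, but the prompt changed
print(json.dumps(asdict(base)))         # store this alongside the output image
```

The comparison makes the limitation concrete: sharing a seed is not enough, because any differing field (here the subject) means the seed no longer reproduces the earlier image.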

Method 2: Style Prefix Prompts (Better, Still Fragile)

The prefix approach separates style from content in your prompt. You write a detailed style description and prepend it to every FLUX generation:

[Style description block] — [Content description]

Example:

Soft diffused studio lighting, cool color palette, minimal negative space, photographic texture. High resolution. — A ceramic coffee mug on a stone countertop

FLUX responds well to detailed text prefixes because of its strong prompt adherence. Unlike some image models where long prompts get diluted, FLUX parses detailed style descriptions with reasonable fidelity.
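The prefix pattern is easy to enforce in code: keep the style block in one constant and build every prompt from it, so style always comes first and is never retyped. This is a minimal sketch; `STYLE_PREFIX` and `build_prompt` are hypothetical names, and the separator simply mirrors the dash used in the prefix layout.

```python
# Hypothetical helper: one canonical style block, prepended to every content description.
STYLE_PREFIX = (
    "Soft diffused studio lighting, cool color palette, minimal negative space, "
    "photographic texture. High resolution."
)
SEPARATOR = " \u2014 "  # the dash separator from the prefix layout

def build_prompt(content: str, style: str = STYLE_PREFIX) -> str:
    """Return style + separator + content, with the style block always first."""
    content = content.strip()
    if not content:
        raise ValueError("content description is empty")
    return f"{style.strip()}{SEPARATOR}{content}"

for subject in ["A ceramic coffee mug on a stone countertop", "A glass bottle on a linen tablecloth"]:
    print(build_prompt(subject))
```

Only the content argument changes between generations; the style text comes from a single source.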

Practical tips for FLUX prefixes:

- Put style before content. FLUX, like most language-guided models, tends to weight earlier tokens more heavily. Lead with your visual rules. Front-load lighting — it is the single highest-impact quality factor for FLUX output.

- Use specific technical language. "Soft directional light from upper-left, 5200K color temperature" outperforms "warm natural lighting" for consistency. Use hex color codes (e.g., hex #8B8B8B) instead of named colors; FLUX renders them with high accuracy.

- Write in natural prose, not labeled blocks. FLUX was trained on descriptive image captions. Flowing descriptions like "soft directional daylight, warm ivory and cool gray palette" parse more reliably than "LIGHTING: soft directional daylight". Save the labeled-section format for ChatGPT.

- Copy-paste exactly. Even minor rephrasing changes how FLUX processes the prefix. Never rewrite your style block from memory.
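If you already keep a style in labeled sections (for ChatGPT, say), you can convert it to FLUX-friendly prose mechanically instead of rewriting it by hand. This minimal sketch assumes one `LABEL: description` pair per line with an uppercase label; that input format and the `labeled_to_prose` name are assumptions for illustration.

```python
import re

# Assumed input format: one "LABEL: description" pair per line, label in uppercase.
LABEL_PATTERN = re.compile(r"^\s*[A-Z][A-Z /&-]*:\s*")

def labeled_to_prose(block: str) -> str:
    """Strip leading section labels and join the descriptions into one comma-separated line."""
    parts = []
    for line in block.splitlines():
        text = LABEL_PATTERN.sub("", line).strip().rstrip(".,")
        if text:
            parts.append(text)
    return ", ".join(parts) + "." if parts else ""

labeled = """LIGHTING: soft directional daylight
PALETTE: warm ivory and cool gray
TEXTURE: visible material grain."""
print(labeled_to_prose(labeled))
# soft directional daylight, warm ivory and cool gray, visible material grain.
```

Lines without an uppercase label (for example "5200K color temperature") pass through unchanged, so a partly converted block still works.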

The limitation: This is still manual, unvalidated, and requires prompt engineering skill. You must remember to paste the prefix, you cannot easily tell when it has drifted, and the effectiveness depends on how well the style text is written.
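One way to catch drift is to record a short fingerprint of the exact style text with each generation: if two images carry different fingerprints, their prefixes were not identical. This is a minimal sketch using SHA-256 from Python's standard library; the `style_fingerprint` helper and the 12-character truncation are illustrative choices, not part of any FLUX tooling.

```python
import hashlib

def style_fingerprint(style_block: str) -> str:
    """Short SHA-256 fingerprint of the exact style text (leading/trailing whitespace ignored)."""
    digest = hashlib.sha256(style_block.strip().encode("utf-8")).hexdigest()
    return digest[:12]

original = "Soft diffused studio lighting, cool color palette, minimal negative space."
pasted = "Soft diffused studio lighting, cool color palette, minimal negative space.\n"
from_memory = "Soft diffused studio light, cool color palette, minimal negative space."

print(style_fingerprint(original) == style_fingerprint(pasted))       # True: exact copy
print(style_fingerprint(original) == style_fingerprint(from_memory))  # False: one word changed
```

A hash flags any change, however small, which matches the copy-paste-exactly rule: the check tells you the text differs, and a diff against the approved block tells you where.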

What Vocabulary Works Best in FLUX Style Specifications

FLUX parses certain categories of descriptive language with higher fidelity than others. This reference covers what works reliably — a practical edge most FLUX guides do not cover.

Lighting language FLUX handles precisely:

- Color temperature: "5600K daylight," "3200K tungsten warmth," "mixed ambient and tungsten"

- Direction: "hard side light from camera-left," "top-down flat light," "rim lighting from behind"

- Quality: "diffuse fill, no hard shadows," "high contrast, single source," "softbox-quality diffusion"

- Ratios: "2:1 key to fill ratio," "high contrast, deep shadows"
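Because these lighting terms are really parameters, you can generate the lighting clause from a few fixed values and reuse it verbatim. This is a minimal sketch; the `lighting_phrase` helper, its argument names, and the 1000 to 10000K sanity range are assumptions for illustration.

```python
def lighting_phrase(kelvin: int, direction: str, quality: str, key: int = 2, fill: int = 1) -> str:
    """Compose a lighting clause from explicit values (hypothetical helper)."""
    if not 1000 <= kelvin <= 10000:  # assumed sanity range for photographic color temperature
        raise ValueError(f"unexpected color temperature: {kelvin}K")
    if key <= 0 or fill <= 0:
        raise ValueError("key and fill must be positive")
    return f"{quality} light {direction}, {kelvin}K color temperature, {key}:{fill} key to fill ratio"

print(lighting_phrase(5200, "from upper-left", "soft directional"))
# soft directional light from upper-left, 5200K color temperature, 2:1 key to fill ratio
```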

Palette language:

- Named palettes: "desaturated earth tones," "high-key neutral palette," "cool steel and warm ivory"

- Explicit exclusions: "no warm reds or orange tones," "avoid saturated colors"

- Value range: "compressed dynamic range, no pure blacks," "high key, bright midtones"
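Palette language pairs well with the hex-code tip from Method 2: name each color, attach its hex value, and append explicit exclusions. This is a minimal sketch; the `palette_phrase` helper and its input shape are hypothetical, and the regex only checks that each code is a six-digit hex value.

```python
import re
from typing import Sequence

HEX_CODE = re.compile(r"#[0-9A-Fa-f]{6}")

def palette_phrase(colors: Sequence[tuple[str, str]], exclusions: Sequence[str] = ()) -> str:
    """Build 'name hex #RRGGBB' entries followed by 'no ...' exclusions (hypothetical helper)."""
    entries = []
    for name, code in colors:
        if not HEX_CODE.fullmatch(code):
            raise ValueError(f"not a 6-digit hex color: {code!r}")
        entries.append(f"{name} hex {code.upper()}")
    entries += [f"no {item}" for item in exclusions]
    return "Color palette: " + ", ".join(entries) + "."

print(palette_phrase(
    [("cool stone gray", "#8b8b8b"), ("warm ivory", "#F5F0E8")],
    exclusions=["warm reds or orange tones"],
))
# Color palette: cool stone gray hex #8B8B8B, warm ivory hex #F5F0E8, no warm reds or orange tones.
```

Normalizing hex codes to uppercase keeps the text byte-identical across sessions even if colors were entered in mixed case.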

Composition language:

- Rule of thirds: "subject placed on left third," "strong diagonal composition"

- Negative space: "minimal subject footprint, maximum negative space"

- Perspective: "eye-level perspective," "slight low-angle looking up," "drone top-down"

What does NOT work reliably:

- Evocative emotional language without anchoring. "Melancholic" works better paired with "desaturated, cool palette, still air" than alone.

- Vague superlatives. "Extremely detailed" and "incredibly realistic" produce noise. Use "high-resolution texture" or "visible material grain" instead.

- Competing style signals. FLUX weights each signal, and unexpected blends occur when contradictory style cues exist in the same block.
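The list of what does not work can double as a quick pre-flight check before you lock a style block. This is a minimal heuristic sketch: the phrase lists are taken from the examples above and are deliberately incomplete, and flagging a missing Kelvin value or hex code reflects this guide's recommendations, not any FLUX requirement.

```python
import re

VAGUE_PHRASES = ("extremely detailed", "incredibly realistic")  # examples from the list above
UNANCHORED_MOODS = ("melancholic", "moody")                      # should be paired with concrete terms

def lint_style_block(text: str) -> list[str]:
    """Return heuristic warnings for a style block; an empty list means nothing was flagged."""
    lowered = text.lower()
    warnings = [f"vague superlative: {p!r}" for p in VAGUE_PHRASES if p in lowered]
    if not re.search(r"\b\d{4,5}K\b", text):
        warnings.append("no color temperature (e.g. 5200K)")
    if not re.search(r"#[0-9A-Fa-f]{6}\b", text):
        warnings.append("no hex color codes")
    for mood in UNANCHORED_MOODS:
        if mood in lowered and "palette" not in lowered:
            warnings.append(f"{mood!r} without palette anchoring")
    return warnings

print(lint_style_block("Extremely detailed, moody lighting."))
print(lint_style_block("Soft directional daylight, 5200K color temperature, palette hex #8B8B8B."))
```

The checks are string matches, so they catch the obvious cases only; competing style signals, for example, still need a human read.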


Method 3: A Structured Style Specification for FLUX Consistency

A structured style specification captures your visual aesthetics as a reusable document — but unlike ChatGPT (where a HARD CONSTRAINT header and labeled sections work best), FLUX responds better to natural prose with technical precision. The format matters: FLUX parses flowing descriptive text with higher fidelity than rigid labeled blocks.

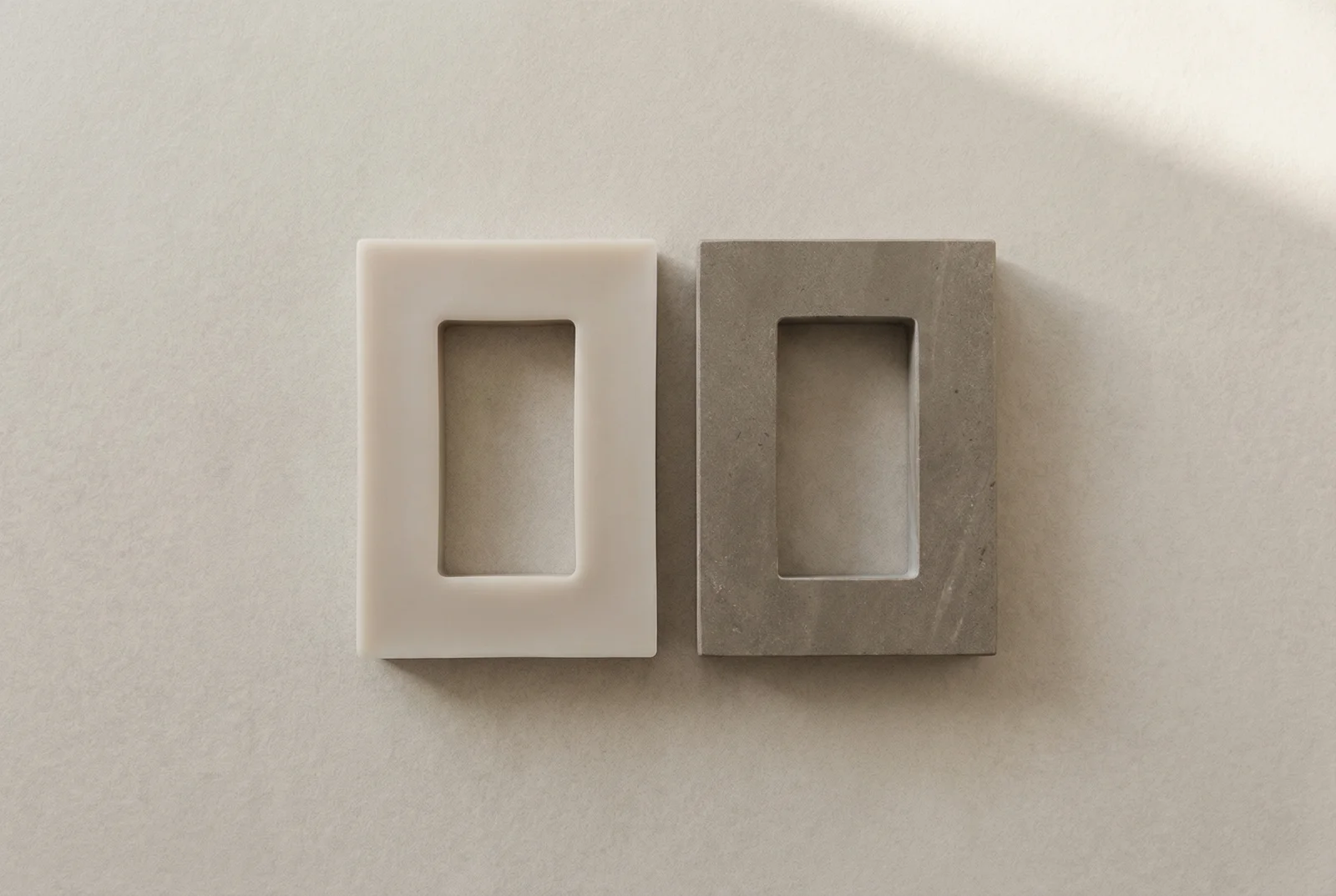

Here is what a FLUX-optimized style spec looks like:

[YOUR SUBJECT/SCENE PROMPT HERE].
Soft directional daylight from upper left, 5200K color temperature, 0.6 fill ratio, no specular highlights on subjects.
Color palette: cool stone gray hex #8B8B8B, warm ivory hex #F5F0E8, deep navy accent hex #1A1A40, 20-50% saturation, warm color temperature.
Left-third subject placement, minimum 30% negative space, eye-level perspective, square or vertical crop composition.
High-resolution photographic texture, visible material surface quality.
Style: photographic, eye-level accuracy.
Mood: quiet, contemplative, low energy, restrained.
-- Style definition optimized for FLUX. Generated by StyleRef.io

Notice the differences from a ChatGPT-style spec: no HARD CONSTRAINT header (FLUX does not process that framing), hex color codes inline (FLUX renders specific hex values with high accuracy), natural comma-separated prose instead of labeled blocks, and a subject placeholder at the top, so the subject receives the extra weight FLUX gives to earlier tokens.

StyleRef generates this FLUX-native format automatically. When you export a StyleRef for FLUX, it restructures your style blocks into this prose format — front-loading lighting (the highest-impact quality factor for FLUX), embedding hex colors inline, and using Style/Mood annotations at the end that FLUX parses reliably.
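The ordering described above (subject placeholder first, lighting next, Style and Mood annotations last) is easiest to keep fixed if the spec lives as data and the prose is rendered from it. This is a minimal sketch; `StyleSpec` and its fields are hypothetical and are not StyleRef's actual data model.

```python
from dataclasses import dataclass

@dataclass
class StyleSpec:
    """Hypothetical container for one visual style; each field holds a prose fragment."""
    lighting: str
    palette: str
    composition: str
    texture: str
    style: str
    mood: str

    def to_flux_prompt(self, subject: str) -> str:
        """Render subject, lighting, palette, composition, texture, then Style and Mood annotations."""
        lines = [
            f"{subject.strip().rstrip('.')}.",
            self.lighting,
            self.palette,
            self.composition,
            self.texture,
            f"Style: {self.style}.",
            f"Mood: {self.mood}.",
        ]
        return "\n".join(lines)

spec = StyleSpec(
    lighting="Soft directional daylight from upper left, 5200K color temperature, no specular highlights on subjects.",
    palette="Color palette: cool stone gray hex #8B8B8B, warm ivory hex #F5F0E8, deep navy accent hex #1A1A40.",
    composition="Left-third subject placement, minimum 30% negative space, eye-level perspective.",
    texture="High-resolution photographic texture, visible material surface quality.",
    style="photographic",
    mood="quiet, contemplative, low energy, restrained",
)
print(spec.to_flux_prompt("A ceramic coffee mug on a stone countertop"))
```

Keeping the order inside one method means no prompt can accidentally put mood before lighting.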

Why this works:

- Natural prose maps to FLUX's text parsing. FLUX was trained on descriptive image captions, not structured instructions. Flowing text matches its training distribution better than rigid labeled sections.

- Hex colors inline produce accurate palette reproduction. FLUX renders hex #8B8B8B more consistently than "cool gray"; the specificity reduces interpretation variance.

- Front-loaded dimensions get more weight. Lighting and atmosphere first, mood annotations last: this ordering matches how FLUX allocates attention across the prompt.

- Maintainable and auditable. When style drifts, you can identify exactly which descriptive phrase needs adjustment.

Building your spec from FLUX outputs: Generate 5–6 FLUX images that represent your target aesthetic. Use StyleRef to extract the visual attributes from those images — it analyzes palette, lighting, composition, and mood automatically, then formats the output in the FLUX-native prose style. Review, adjust, and you have a production-ready FLUX style spec.

The portability advantage: StyleRef stores your style as structured blocks internally, then generates the right format for each tool. The same style exports as natural prose for FLUX, as a HARD CONSTRAINT spec for ChatGPT and Claude, and as keywords plus --sref parameters for Midjourney. Update your style once and every format updates.
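The "store once, render per tool" idea can be sketched with one dictionary of style blocks and one renderer per target. This is a minimal sketch under stated assumptions: the block names, the HARD CONSTRAINT wording, and both renderer functions are illustrative, not StyleRef's actual export formats.

```python
# Hypothetical store: one canonical set of style blocks, edited in one place.
STYLE_BLOCKS = {
    "lighting": "soft directional daylight from upper left, 5200K color temperature",
    "palette": "cool stone gray hex #8B8B8B, warm ivory hex #F5F0E8",
    "composition": "left-third subject placement, minimum 30% negative space",
    "mood": "quiet, contemplative, restrained",
}

def render_for_flux(subject: str, blocks: dict[str, str]) -> str:
    """Natural prose: subject first, then the blocks in insertion order as one comma-separated run."""
    body = ", ".join(blocks.values())
    return f"{subject}. {body[:1].upper()}{body[1:]}."

def render_for_chat(subject: str, blocks: dict[str, str]) -> str:
    """Labeled sections under a constraint header, the layout this guide suggests for chat assistants."""
    lines = ["HARD CONSTRAINT: follow this visual style exactly."]
    lines += [f"{name.upper()}: {text}" for name, text in blocks.items()]
    lines.append(f"SUBJECT: {subject}")
    return "\n".join(lines)

subject = "A ceramic coffee mug on a stone countertop"
print(render_for_flux(subject, STYLE_BLOCKS))
print(render_for_chat(subject, STYLE_BLOCKS))
```

Editing a value in `STYLE_BLOCKS` changes both outputs at once, which is the portability property in miniature.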

FLUX vs. Midjourney vs. DALL-E: Style Consistency Compared

| Feature | FLUX | Midjourney | DALL-E 3 |
|---|---|---|---|
| Built-in style reference | No native parameter | --sref image reference | No native parameter |
| Text prompt adherence | Very high | High | Moderate |
| Seed reproducibility | Exact (same prompt and settings) | Similar (same prompt) | No user-set seed |
| Cross-model portability | Text spec required | --sref is Midjourney-only | Text spec required |
| Best consistency method | Structured text spec | --sref + text spec | Text spec |
| Style vocabulary precision | Excellent for technical terms | Good, some reinterpretation | Moderate |
| Photorealistic quality | Excellent (Pro) | Excellent (v6+) | Good |

FLUX's lack of a native style reference tool is a constraint — but it also means FLUX users build text-based systems from the start. Those text-based systems are inherently more portable than visual references tied to a single tool.

Frequently Asked Questions

Does FLUX have a built-in style reference feature like Midjourney's --sref?

No — FLUX (as of 2026) does not have a native style reference parameter equivalent to Midjourney's --sref. Style consistency in FLUX relies on text-based specifications, seeds for exact reproducibility, and prompt architecture. A well-written text style spec is therefore particularly important for FLUX workflows.

Which FLUX version should I use for the most consistent style results?

FLUX Pro (commercial) generally produces the most consistent style adherence because it follows text prompts with higher fidelity. FLUX Dev (open-weight model) is more varied but still benefits significantly from structured style specifications. The techniques in this guide apply to all FLUX variants, though effectiveness varies by model generation.

Can I use the same style spec for both FLUX and Midjourney?

Yes — a text-based style specification works in both. You may want to append Midjourney-specific parameters (--ar, --style, --v) to the end of Midjourney prompts, but the style spec itself transfers directly. This cross-model portability is the key advantage of a text spec over Midjourney's visual --sref parameter.
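Appending the Midjourney-only parameters at the very end keeps the shared spec text untouched. This is a minimal sketch; `for_midjourney` is a hypothetical helper, and the parameter values shown are placeholders, so check Midjourney's documentation for the values your model version accepts.

```python
def for_midjourney(style_spec: str, subject: str, **params: str) -> str:
    """Subject and shared spec first, then Midjourney parameters (e.g. ar, style, v) appended last."""
    flags = " ".join(f"--{name} {value}" for name, value in params.items())
    prompt = f"{subject.strip()}. {style_spec.strip()}"
    return f"{prompt} {flags}" if flags else prompt

SPEC = "Soft directional daylight from upper left, 5200K color temperature, quiet, restrained mood."
print(for_midjourney(SPEC, "A ceramic coffee mug", ar="4:5", style="raw"))
# A ceramic coffee mug. Soft directional daylight from upper left, 5200K color temperature, quiet, restrained mood. --ar 4:5 --style raw
```

Calling it without keyword arguments returns the same text you would send to FLUX, so one spec string serves both tools.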

How long should a FLUX style prefix be?

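100–250 words for the style block is a good target. Long enough to define all key dimensions precisely; short enough that the content description receives sufficient processing weight. Extremely long style blocks (500+ words) can crowd out content descriptions and produce style-heavy but content-imprecise outputs. There is also a hard ceiling: the open FLUX.1 reference code caps the T5 text encoder at 512 tokens (256 for FLUX.1 [schnell]), so text past that point is truncated, and in a style-first prompt the truncated part is the content description.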
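A quick way to keep a style block inside the suggested 100 to 250 word range is to count words before you lock it. This is a minimal sketch; `style_block_report` is a hypothetical helper, and a whitespace word count is only a rough proxy for the model's token count.

```python
def style_block_report(style_block: str, low: int = 100, high: int = 250) -> str:
    """Classify a style block by whitespace-delimited word count against the suggested range."""
    words = len(style_block.split())
    if words < low:
        return f"{words} words: likely under-specified (target {low}-{high})"
    if words > high:
        return f"{words} words: may crowd out the content description (target {low}-{high})"
    return f"{words} words: within target"

print(style_block_report("soft directional daylight " * 50))  # 150 words: within target
```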

I tried detailed FLUX prompts and the outputs still vary. What am I doing wrong?

The most common causes: (1) Style language is too evocative and not technical enough — "moody" varies; "desaturated, cool temperature lighting, deep shadows" is more reliable. (2) Style and content descriptions are mixed rather than separated — put all style language before content. (3) The style spec is not identical across sessions — even small rephrasing changes how FLUX parses it. Copy-paste exactly, do not rewrite from memory.

FLUX's strength — its exceptional text adherence — is also what makes style consistency a deliberate practice rather than a default behavior. You need to be explicit about what you want, and consistently explicit across every prompt.

The work of building a style specification pays off every session. Instead of re-prompting and correcting variance, you paste your spec and get consistent results. And because the spec is text-based, it travels: the same document works in FLUX, Midjourney, DALL-E, and any other tool you adopt next.