Midjourney Style Reference: The Complete Guide

How to use Midjourney style references: from the --sref parameter and Style Tuner to external style specs that work across every AI tool, not just Midjourney.

You got the perfect Midjourney result. Now you need three more images that match its exact visual feel: the same palette, the same lighting, the same texture. They all drift.

This is the core challenge with Midjourney style reference work: the tool is exceptional at creating images from prompts, and mediocre at creating visually consistent images across prompts. Style variance is built into how diffusion models generate images, and Midjourney is no exception.

A Midjourney style reference is a method for maintaining visual consistency across multiple AI image generations. Midjourney offers three native tools for this: the --sref parameter (style reference image), the --cref parameter (character reference), and the Style Tuner. Each works differently and suits different use cases.

The --sref parameter in Midjourney lets you reference an existing image to influence the style of a new generation. You append --sref [image URL] to your prompt, and Midjourney uses that image's visual qualities — colors, texture, mood, composition — as a stylistic guide for the output. It works best for visual style transfer, not for character or subject consistency (use --cref for that).

This guide covers all three native Midjourney tools for style consistency — --sref, --cref, and the Style Tuner — with honest assessments of their limitations. It then covers a more powerful approach for serious cross-tool creative work: a text-based style specification that works in Midjourney and every other AI image tool.

Why Midjourney Generates Different Results Every Time

Diffusion models like Midjourney generate images by starting from random noise and progressively denoising toward an image guided by your prompt. The randomness — controlled by a seed value — determines where the process starts, and small differences in starting noise produce significantly different outputs.

The --seed parameter fixes this starting noise. With an identical prompt and seed, you get an identical or near-identical image. Change anything in the prompt, even a single word, and the seed's consistency breaks. This is why seed-locking is useful for exact reproduction but insufficient for Midjourney style reference work. You want different prompts with the same visual feel, not the same prompt with the same image.
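As a rough illustration of the seed behavior, the toy sketch below uses Python's standard `random` module as a stand-in for a diffusion model's starting noise. It is not Midjourney's sampler, and `starting_noise` is a hypothetical helper; the only point is that a fixed seed reproduces the same starting point while a different seed does not.

```python
import random
from typing import List


def starting_noise(seed: int, size: int = 4) -> List[float]:
    """Toy stand-in for initial diffusion noise: seeded Gaussian samples."""
    rng = random.Random(seed)  # private generator, so global state is untouched
    return [rng.gauss(0.0, 1.0) for _ in range(size)]


same_a = starting_noise(seed=1234)
same_b = starting_noise(seed=1234)
other = starting_noise(seed=1235)

print("same seed, same noise:", same_a == same_b)
print("different seed, same noise:", same_a == other)
```

A seed pins where generation starts. It carries no information about palette, lighting, or texture, so it cannot hold a style steady once the prompt changes.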

The attributes that vary most between generations are the ones that define a visual identity: lighting direction and temperature shift between runs; palette saturation and hue relationships change; composition and framing differ; texture oscillates between photographic and painterly. The subject matter stays roughly consistent, but the visual treatment drifts.

This is what Midjourney's style reference tools — --sref, --cref, and Style Tuner — were designed to address.

Method 1: The --sref Parameter (Midjourney Style Reference)

The --sref parameter references an existing image to borrow its visual style for a new generation. The model attempts to transfer the reference image's aesthetic qualities without copying the subject.

Syntax:

/imagine [your prompt] --sref [image URL]

For multiple references:

/imagine [your prompt] --sref [URL1] [URL2]

Style weight (--sw): Controls how strongly the style reference influences the output. Range is 0–1000, default 100. Higher values increase style influence but may override prompt specificity.

How to use --sref effectively:

- Upload or find a reference image that captures your target style

- Get a direct image URL (from Midjourney's own outputs, or upload to a hosting service)

- Add --sref [URL] at the end of your prompt

- Adjust --sw if the style influence is too strong or too weak
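The steps above can be sketched as a small string builder. This is a minimal sketch, assuming you assemble prompts as text before pasting them into Midjourney: `build_sref_prompt` is a hypothetical helper that only formats a command and does not call any Midjourney API, and the example.com URL is a placeholder.

```python
from typing import Optional, Sequence


def build_sref_prompt(
    prompt: str,
    sref_urls: Sequence[str],
    style_weight: Optional[int] = None,
) -> str:
    """Assemble an /imagine command with --sref and an optional --sw.

    Hypothetical helper: it formats text only and contacts nothing.
    """
    if not sref_urls:
        raise ValueError("at least one style reference URL is required")
    # The guide gives the --sw range as 0-1000 (default 100).
    if style_weight is not None and not 0 <= style_weight <= 1000:
        raise ValueError("--sw must be between 0 and 1000")

    parts = [f"/imagine {prompt.strip()}", "--sref " + " ".join(sref_urls)]
    if style_weight is not None:
        parts.append(f"--sw {style_weight}")
    return " ".join(parts)


print(
    build_sref_prompt(
        "a lighthouse at dusk",
        ["https://example.com/style.png"],  # placeholder URL
        style_weight=250,
    )
)
```

Validating the weight before building the string catches out-of-range values early, which is handy when several people share one prompt template.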

Strengths: Built-in, free, visually intuitive. If you already have a reference image, this is the fastest path to style influence.

Limitations:

- Visual-only reference. No text-based style definition — you cannot encode rules like "never use warm orange tones" or "always use hard shadows."

- Midjourney-only. Useless for maintaining style consistency in FLUX, DALL-E, ChatGPT, or any other tool.

- Single-moment capture. The reference image captures one instance of your style — it cannot represent abstract rules, constraints, or vocabulary that define a broader aesthetic.

- Quality-dependent. Style transfer fidelity varies significantly based on the reference image quality and the complexity of the style being transferred.

- Cannot encode negative rules. An image reference cannot express "never use lens flare" or "avoid saturated warm tones" — only positive attributes transfer.

Method 2: Character Reference (--cref) and Style Tuner

The --cref Parameter

--cref maintains character or subject consistency — keeping the same person, creature, or product across generations, not just the style.

/imagine [prompt] --cref [character image URL]

Character weight (--cw): Controls matching strength on a 0–100 scale (default 100). At 100 the model matches face, hair, and clothing; at 0 it matches only the face, which is useful when you want to change outfits or hairstyle.

--cref and --sref can be combined for both character AND style consistency:

/imagine [prompt] --sref [style URL] --cref [character URL]

Best for: Illustration series with recurring characters, brand mascots, product photography with consistent subjects.
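Combining the two references can be sketched the same way. The helper below is hypothetical and only formats text; it assumes the 0 to 100 range for --cw and places --sref before --cref, mirroring the combined syntax shown above. The URLs are placeholders.

```python
from typing import Optional


def build_character_prompt(
    prompt: str,
    cref_url: str,
    character_weight: Optional[int] = None,
    sref_url: Optional[str] = None,
) -> str:
    """Format an /imagine command with --cref, plus optional --cw and --sref.

    Hypothetical helper for illustration; it does not talk to Midjourney.
    """
    if character_weight is not None and not 0 <= character_weight <= 100:
        raise ValueError("--cw must be between 0 and 100")

    parts = [f"/imagine {prompt.strip()}"]
    if sref_url:
        parts.append(f"--sref {sref_url}")  # style reference first
    parts.append(f"--cref {cref_url}")      # then the character reference
    if character_weight is not None:
        parts.append(f"--cw {character_weight}")
    return " ".join(parts)


print(
    build_character_prompt(
        "a fox mascot waving",
        cref_url="https://example.com/fox.png",
        character_weight=60,
        sref_url="https://example.com/style.png",
    )
)
```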

The Style Tuner

The Style Tuner is an interactive feature that generates style variations from a base prompt, letting you select preferred aesthetic directions and create a reusable style code.

How it works:

- Run /tune [your prompt] in Discord

- Midjourney generates a grid of style variants

- Select the variants that match your preferred aesthetic

- Midjourney creates a --style [code] parameter to reuse

Strengths: Visual, intuitive, generates a reusable code that you can share.

Limitations: Style Tuner codes are prompt-dependent and may not generalize to very different subject matter. Codes can stop working after Midjourney model updates — they are tied to a specific model version. And like --sref, the Style Tuner is Midjourney-only.

--sref vs. --cref vs. Style Tuner vs. External Style Specification

| Feature | --sref | --cref | Style Tuner | Text Style Spec |
|---|---|---|---|---|
| Purpose | Visual style transfer | Character consistency | Curated style code | Full style definition |
| Input | Image URL | Image URL | Prompt-based tuning | Structured text blocks |
| Reusable across prompts | Yes (with variance) | Yes (character only) | Yes (limited) | Yes (high fidelity) |
| Works in other AI tools | No | No | No | Yes (all tools) |
| Encodes negative rules | No | No | No | Yes |
| Explicit creative direction | No (model infers) | No (model infers) | Partial | Full control |
| Survives model updates | Usually | Usually | Sometimes breaks | Always |
| Shareable with team | Image URL only | Image URL only | Style code | Full text document |

What None of These Solve: Cross-Tool Midjourney Style Reference

All three Midjourney style reference tools — --sref, --cref, and Style Tuner — share the same fundamental limitation: they exist only within Midjourney.

They solve nothing for:

- Using the same visual style in DALL-E, Firefly, or maintaining consistent style in FLUX

- Maintaining a consistent brand voice in corresponding AI-generated copy — keeping your brand voice consistent in ChatGPT requires a separate approach entirely

- Briefing a team member or client on your visual style when they use a different AI tool

- Archiving a style so you can return to it reliably in six months

The deeper problem: these tools are all reactive. You reference an image, or tune from a generated output. There is no place to explicitly state: "My palette is exclusively cool grays and acid green. Lighting is always side-lit with hard shadows. Never use lens flare." You cannot define rules — only reference examples.

What professionals actually need is a portable, text-based style specification that functions as explicit creative direction — precise enough to constrain any AI model, structured enough to be maintained and shared.

Method 3: A Text-Based Style Specification (Works Everywhere)

An external style specification defines your visual identity as a reusable document you can apply in any AI tool. But the format matters — what works best in Midjourney looks different from what works in ChatGPT or FLUX.

Midjourney-native format:

Midjourney parses prompts as comma-separated keywords with --parameters at the end. A Midjourney-optimized style spec follows this convention:

/imagine prompt: [YOUR SUBJECT/SCENE PROMPT HERE], quiet,
contemplative, low energy, restrained, cool stone gray, warm ivory,
deep navy, 20-50% saturation, soft directional daylight, 5200K,
0.6 fill ratio, left-third subject placement, 30% negative space,
eye-level perspective, high-resolution photographic texture,
visible material surfaces --ar 1:1 --sref [your reference URL]
--no warm orange, lens flare, HDR processing

Notice: no HARD CONSTRAINT header, no labeled LIGHTING: or PALETTE: sections. Midjourney does not parse those structures — it reads a stream of keywords and parameters. StyleRef generates this format automatically when you export for Midjourney: your style blocks become comma-separated keywords, your inspiration images become --sref references, your aspect ratio becomes --ar, and your guardrails (things to avoid) become --no exclusions.
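To make the mapping concrete, here is a minimal sketch of how a stored style might be flattened into that keyword stream. It is not StyleRef's actual export code, which the guide does not show; `to_midjourney_prompt` and its arguments are hypothetical, and the URL is a placeholder.

```python
from typing import Sequence


def to_midjourney_prompt(
    subject: str,
    keywords: Sequence[str],
    aspect_ratio: str = "1:1",
    sref_urls: Sequence[str] = (),
    exclusions: Sequence[str] = (),
) -> str:
    """Flatten a style into keywords followed by --ar, --sref and --no.

    Hypothetical helper: subject first, style keywords after it,
    parameters at the very end, as in the example prompt above.
    """
    body = ", ".join([subject.strip(), *keywords])
    params = [f"--ar {aspect_ratio}"]
    if sref_urls:
        params.append("--sref " + " ".join(sref_urls))
    if exclusions:
        params.append("--no " + ", ".join(exclusions))
    return f"/imagine prompt: {body} " + " ".join(params)


print(
    to_midjourney_prompt(
        "ceramic teapot on a windowsill",
        keywords=["quiet", "cool stone gray", "soft directional daylight"],
        sref_urls=["https://example.com/ref.png"],  # placeholder URL
        exclusions=["warm orange", "lens flare"],
    )
)
```

Keeping the keywords and exclusions as lists, rather than one pasted string, makes it easy to swap the subject while the style portion stays byte-for-byte identical.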

How this complements --sref:

Used together, the text spec + --sref parameter is more powerful than either alone. The --sref image provides an implicit visual target; the keyword spec provides explicit constraints the image cannot encode. "Always 30% negative space at top" or "avoid warm orange tones" — these rules survive as --no parameters and positioning keywords even when the --sref image is ambiguous.

Cross-tool portability — one style, different formats:

StyleRef stores your visual identity as structured blocks internally. When you export, it generates the right format for each tool:

- Midjourney: Keywords + --sref + --ar + --no parameters (matches /imagine syntax)

- FLUX: Natural prose with hex colors inline and Style/Mood annotations (matches FLUX's caption-trained model)

- ChatGPT / Claude: STYLE REFERENCE — HARD CONSTRAINT with labeled sections and an enforcement rule (optimized for instruction-following LLMs)

Update your style once in StyleRef and every model-specific format updates. This is the real portability — not pasting the same generic text everywhere and hoping each model interprets it the same way.
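The idea of one stored style with several renderings can be sketched in a few lines. Everything here is an assumption made for illustration: the block names, the `AVOID` label, and the simplified header line are hypothetical, and StyleRef's real export layout may differ.

```python
from typing import Dict, List

# Hypothetical structured blocks; the values are borrowed from the example prompt.
STYLE_BLOCKS: Dict[str, List[str]] = {
    "MOOD": ["quiet", "contemplative"],
    "PALETTE": ["cool stone gray", "warm ivory", "deep navy"],
    "LIGHTING": ["soft directional daylight", "5200K"],
}
GUARDRAILS: List[str] = ["warm orange", "lens flare", "HDR processing"]


def to_keyword_line(blocks: Dict[str, List[str]], guardrails: List[str]) -> str:
    """Keyword-stream rendering: labels dropped, guardrails become --no."""
    keywords = [kw for values in blocks.values() for kw in values]
    return ", ".join(keywords) + " --no " + ", ".join(guardrails)


def to_labeled_sections(blocks: Dict[str, List[str]], guardrails: List[str]) -> str:
    """Labeled rendering for instruction-following models (assumed layout)."""
    lines = ["STYLE REFERENCE: HARD CONSTRAINT"]
    lines += [f"{label}: {', '.join(values)}" for label, values in blocks.items()]
    lines.append("AVOID: " + ", ".join(guardrails))
    return "\n".join(lines)


print(to_keyword_line(STYLE_BLOCKS, GUARDRAILS))
print()
print(to_labeled_sections(STYLE_BLOCKS, GUARDRAILS))
```

Both renderers read the same dictionary, so editing a block once changes every output format, which is the property the paragraph above describes.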

For a deeper look at how to translate professional AI art direction principles into structured specifications, see the companion guide.


How to Build a Midjourney Style Reference That Works in Any AI Tool

Step 1: Choose your style exemplar

Pick 1–3 existing images that represent your ideal aesthetic — Midjourney outputs you are proud of, reference photography, existing brand assets. These are your ground truth.

Step 2: Analyze and extract

For each exemplar, systematically identify: color palette (hues, saturation, value range), lighting (direction, intensity, temperature, quality), composition (subject placement, negative space, perspective), texture (surface details, material qualities), and mood (emotional register, energy level).
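For the palette part of this analysis, a short sketch with Python's standard `colorsys` module can turn sampled hex colors into hue, saturation, and value numbers you can write into a spec. The palette below is a made-up example, and `describe_color` is a hypothetical helper; how you sample colors from the exemplar is left open.

```python
import colorsys
from typing import Tuple


def describe_color(hex_color: str) -> Tuple[int, int, int]:
    """Return (hue in degrees, saturation %, value %) for a #RRGGBB color."""
    digits = hex_color.lstrip("#")
    if len(digits) != 6:
        raise ValueError("expected a #RRGGBB color")
    # Convert each two-digit channel to the 0.0-1.0 range colorsys expects.
    r, g, b = (int(digits[i:i + 2], 16) / 255 for i in (0, 2, 4))
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    return round(h * 360), round(s * 100), round(v * 100)


# Hypothetical colors sampled from an exemplar image.
palette = ["#336699", "#808080", "#FF0000"]
for color in palette:
    hue, saturation, value = describe_color(color)
    print(f"{color}: hue {hue}, saturation {saturation}%, value {value}%")
```

Numbers like these make a rule such as "20-50% saturation" checkable instead of a matter of impression.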

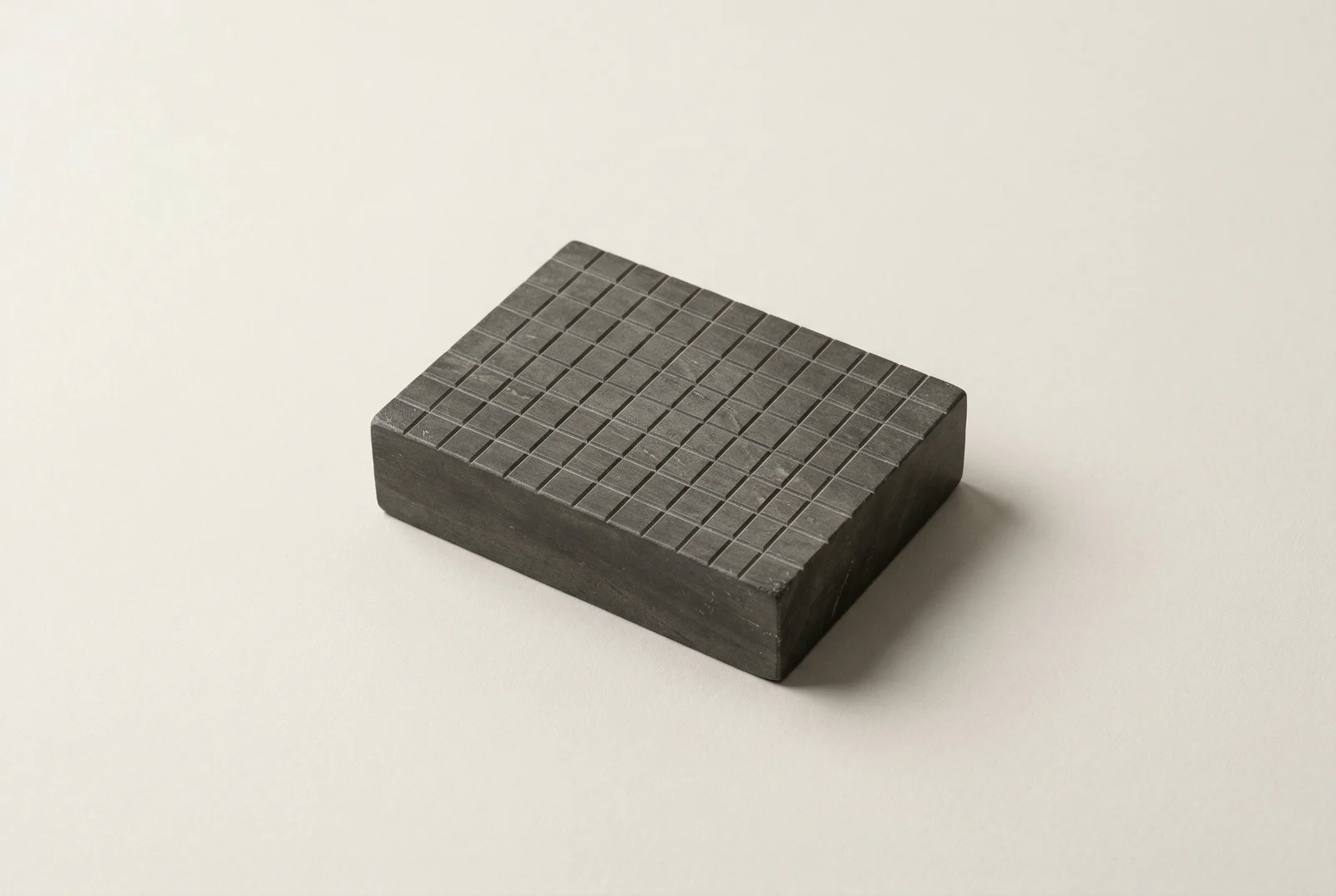

If you use StyleRef: upload the image and it extracts all dimensions automatically, then formats the output as Midjourney-native keywords + parameters — ready to paste into /imagine. Your inspiration images automatically become --sref references, and your visual constraints become --no exclusions. You can see the Kokeshi Toy StyleRef or the 80s Poster visual style spec for examples.

Step 3: Write explicit rules, not descriptions

- Too vague: "Warm and inviting images with natural light"

- Effective: "LIGHTING: Soft, diffused top light. Color temperature 4500K. No hard shadows. Fill ratio minimum 0.7."

The more explicit, the more reliable — in Midjourney and every other model.

Step 4: Add negative constraints

Define what your style explicitly excludes: "No lens flare," "No HDR processing," "No blue-tinted shadows." Negative constraints trim the model's default tendencies and are often more useful than positive descriptions alone.

Step 5: Test across tools and iterate

Run the spec in Midjourney with 5 different prompts. Does the style hold? Run the same spec in FLUX or DALL-E. Does the aesthetic translate? Note any dimensions that drift and add more explicit rules for those.
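One way to keep this test pass honest is to record, per generated image, which style dimensions held, then tally the misses. The sketch below assumes you review images by eye and enter the results yourself; `drifting_dimensions`, the review format, and the sample data are hypothetical.

```python
from collections import Counter
from typing import Dict, List

# The five dimensions from Step 2.
DIMENSIONS = ["palette", "lighting", "composition", "texture", "mood"]


def drifting_dimensions(
    reviews: List[Dict[str, bool]], max_misses: int = 1
) -> List[str]:
    """Return dimensions that failed to hold in more than `max_misses` images.

    Each review maps a dimension to True (held) or False (drifted);
    dimensions missing from a review are treated as held.
    """
    misses: Counter = Counter()
    for review in reviews:
        for dimension in DIMENSIONS:
            if not review.get(dimension, True):
                misses[dimension] += 1
    return [d for d in DIMENSIONS if misses[d] > max_misses]


# Hypothetical notes from five test prompts.
reviews = [
    {"lighting": False},
    {"lighting": False, "palette": False},
    {},
    {"lighting": False},
    {},
]
print(drifting_dimensions(reviews))
```

Dimensions returned here are the ones that need a more explicit rule in the next revision of the spec.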

Frequently Asked Questions

What's the difference between --sref and --cref in Midjourney?

--sref references an image for style transfer — visual qualities like color, texture, and lighting. --cref references an image for character consistency — keeping the same person, creature, or subject across generations. They can be combined: --sref [style URL] --cref [character URL].

Can I use a Midjourney style reference in other AI image tools like FLUX or DALL-E?

Not directly — --sref is a Midjourney-only parameter. For cross-tool consistency, you need a text-based style specification. StyleRef handles this by storing your style as structured blocks and generating the right format per tool: keywords + --sref for Midjourney, natural prose for FLUX, labeled sections for DALL-E. A single style definition, multiple model-native outputs.

How do I keep Midjourney consistent across a whole campaign?

Use a combination of a style specification (for aesthetic rules) and --cref (if the campaign features recurring characters or subjects). Save your style spec as a text file and paste it into the prompt for every generation. That way, images produced by different team members or in different sessions follow the same visual rules.

Does the Midjourney Style Tuner work reliably?

Style Tuner codes work well within a session and for similar prompts, but can degrade for very different subject matter. They are also tied to a specific Midjourney model version — codes from older versions may produce different results after an update. For long-term style consistency, a text-based spec is more durable.

How strong should I set --sw (style weight)?

Default --sw 100 is a good starting point. Increase (up to 1000) if your style reference is not being applied strongly enough. Decrease if the reference is overriding your prompt's subject matter. Values above 500 can cause the style to overwhelm the content.

Can I use someone else's Midjourney image as a --sref?

Technically yes — any accessible image URL works as a --sref. However, be aware of intellectual property considerations when using outputs generated by others. For brand work, using your own outputs or style-extracted specs is cleaner and avoids potential issues.

Midjourney's native tools — --sref, --cref, and the Style Tuner — are genuinely useful and worth mastering. They are the right choice for Midjourney-only workflows where you need quick style influence without leaving the tool.

For multi-tool creative work — images plus copy, multiple AI platforms, team collaboration — a text-based style specification is the more complete solution. It encodes rules that visual references cannot express, transfers across every AI tool, and gives you an auditable record of your creative direction.

StyleRef builds that specification for you from reference images or brand materials. Skip the manual extraction — upload a reference and get a structured style spec in about 30 seconds.